Authors’ Cut—Gear up! Exploring the Broader Observability Ecosystem of Cloud-Native, DevOps, and SRE

By: Liz Fong-Jones

You know that old adage about not seeing the forest for the trees? In our Authors’ Cut series, we’ve been examining individual trees in the observability forest, such as CI/CD pipelines, Service Level Objectives, and the Core Analysis Loop. Today, I’d like to step back and look at how observability fits into the broader technical and cultural shifts in our industry: cloud-native, DevOps, and SRE.

If you want to go deeper after reading this, check out chapter 4 of our O’Reilly book or watch the full webinar, where George, Fred, and I riff on what observability means for practitioners working in SRE, DevOps, and the cloud-native space. As a bonus, Fred shares how observability shapes Honeycomb’s own practices by digging into a chaos engineering experiment we ran recently. Fun!

Feeding the (cloud-native) beast

Let’s start with cloud-native. What even is it? For practitioners, cloud-native is what lets us deliver faster by deploying microservices as containerized workloads. But one thing cloud-native doesn’t do is eliminate complexity; it just shifts that complexity away from the application developer and the end user and onto the platform developer. Fortunately, platform developers are well positioned to manage it, by building observability practices in from the very beginning and evolving them over time.

To take the ecosystem metaphor a bit further, cloud-native systems are like living ecosystems, and they require proper care. No one wants to unexpectedly face a hungry bear at their campsite. Observability helps us trace all of the bear’s recent interactions and make sure it can consistently find food in the wild, rather than treating each encounter in isolation.

What about the humans?

Since the 2000s, engineering leads have increasingly prioritized making sure their teams can operate systems sustainably and humanely as those systems grow in complexity. Site reliability engineering (SRE) grew out of work at Google and entered the mainstream in the mid-2010s, while the DevOps movement and the idea of shifting left developed in parallel. Both movements focus on understanding production systems and taming the complexity beast, so it’s natural that both care about observability.

What does that mean in practice? At Honeycomb, all of our engineers care about cultivating and maintaining observability. And while it’s important to have the data and the systems that produce it, what we really care about is what we can do with that data. Debugging, incident review and analysis, and high-level pattern detection are all made possible by analyzing high-cardinality, high-dimensionality data.
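To make “high-cardinality, high-dimensionality analysis” a little more concrete, here is a minimal sketch in plain Python. It is not Honeycomb’s query engine or API; it assumes hypothetical “wide events” in which each request is one record with many fields (high dimensionality), and some fields, like `user_id` or `build_id`, can take many distinct values (high cardinality). The helper `breakdown` and all field names are illustrative only. The point is that you can group by any field you like after the fact, without deciding in advance which question you’ll ask.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical wide events: one record per request, many fields per record
# (high dimensionality). user_id and build_id can take many distinct values
# (high cardinality). Field names and values are illustrative only.
events = [
    {"user_id": "u-1001", "endpoint": "/api/query", "build_id": "b-42", "region": "us-east-1", "duration_ms": 38, "error": False},
    {"user_id": "u-1002", "endpoint": "/api/query", "build_id": "b-42", "region": "us-east-1", "duration_ms": 41, "error": False},
    {"user_id": "u-2077", "endpoint": "/api/query", "build_id": "b-43", "region": "eu-west-1", "duration_ms": 2150, "error": True},
    {"user_id": "u-2077", "endpoint": "/api/export", "build_id": "b-43", "region": "eu-west-1", "duration_ms": 1980, "error": True},
    {"user_id": "u-1003", "endpoint": "/api/export", "build_id": "b-42", "region": "us-east-1", "duration_ms": 55, "error": False},
]


def breakdown(events, field, metric="duration_ms"):
    """Hypothetical helper: group events by any field and summarize each group."""
    groups = defaultdict(list)
    for event in events:
        groups[event[field]].append(event)

    summary = {}
    for key, group in groups.items():
        summary[key] = {
            "count": len(group),
            "avg_ms": mean(e[metric] for e in group),
            "errors": sum(e["error"] for e in group),  # True counts as 1
        }
    # Slowest groups first, so outliers surface at the top.
    return dict(sorted(summary.items(), key=lambda kv: kv[1]["avg_ms"], reverse=True))


# One question leads to the next: slice by user, then by build.
by_user = breakdown(events, "user_id")
by_build = breakdown(events, "build_id")
```

In this toy data, slicing by `user_id` surfaces one slow, erroring user, and slicing the same events by `build_id` then points at a single build. A real observability tool does this over far more events and fields, but the shape of the workflow is similar.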

Oh yes, and the humans. When we talk about observability, we’re not just talking about technical data. We’re also talking about our ability, as humans, to understand what happened in the system and communicate it to other humans.

Here’s an example: in December 2021, we had a major outage. Of course, we had lots of charts showing the data, but to be honest, none of them were useful in isolation. Their real value was in sparking a group chat where we could all look at the data, tweak each other’s queries, and refine them in an “Oh, we’re looking at it this way; have you tried this angle? If you add this thing, you get something different” kind of way. Observability means you don’t have to know in advance which questions to ask in order to find the answer.

Social debugging 1, Council of the Dashboards* 0.

(*That thing where one person owns all the dashboards, crafts all the queries, and serves as the oracle that interprets everything for everyone. And, when they leave, the system falls apart.)

Chaos can be fun!

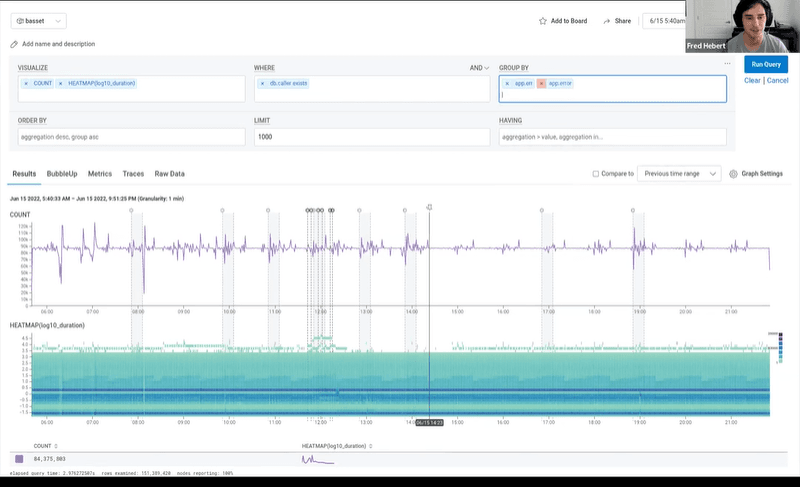

Want to see observability in action? Check out the webinar for a chaos experiment conducted by our very own SRE, Fred Hebert. Here’s just a glimpse of what he has in store:

And if you want to learn more about how observability supercharges things like feature flagging, progressive release patterns, and incident analysis and blameless postmortems, check out our O’Reilly book. Now for some sad news: this is the last installment in our Authors’ Cut series. But don’t feel forlorn; we have more good stuff coming. And if you missed any of our prior chats, you can find them here. If you’re feeling inspired to give Honeycomb a try, sign up to get started with modern observability.